Ideally, a log file should contain exactly the information you need to understand whether the system or application is running correctly, or to find the cause of a malfunction. Unfortunately, we often end up with less data than we need. There can be many reasons behind this:

- performance, because writing too many lines takes a lot of time;

- disk space, because systems don't have unlimited room for files that, most of the time, turn out to be useless;

- lack of knowledge of the future: you simply cannot put a log instruction before every other line of code, so you choose what to log based on your experience.

What I usually do is log when something goes wrong (a file that cannot be opened, bad input, etc.) and when there is some interaction from the outside (e.g. user commands, RPCs, etc.).
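A minimal sketch of this habit, using Python's standard `logging` module. The function names `load_config` and `handle_command` and the logger name `app` are made up for illustration; they are not taken from any real project.

```python
import logging

logger = logging.getLogger("app")  # hypothetical logger name


def load_config(path):
    """Return the file content, or None if the file cannot be opened."""
    try:
        with open(path, encoding="utf-8") as handle:
            return handle.read()
    except OSError as exc:
        # Something went wrong: record what failed and the OS-level reason.
        logger.error("cannot open %s: %s", path, exc)
        return None


def handle_command(command, source):
    """Normalize a command coming from the outside (user, RPC, ...)."""
    # Interaction from the outside: record who asked for what.
    logger.info("command %r received from %s", command, source)
    return command.strip().lower()
```

Everything that happens between these two kinds of events stays silent, which keeps the file small but also means you are betting on having picked the right spots.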

What Or Why?

Last week I had a brief discussion with my boss about what a good log file should contain for a process generating a lot of traffic over the serial port. He argued that, if you don't log every packet that goes in and out over the wire, «what else should you log?»

I don't disagree with him in principle, but in this particular case, where a communication frame (request and answer) lasts less than 300 milliseconds, you end up with an enormous amount of data that is nearly impossible to parse.
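To put a rough number on "enormous", here is a back-of-the-envelope estimate. It assumes the line is busy around the clock with back-to-back frames and that each packet produces one log line; both are assumptions made only for this calculation, and the 300 ms figure is an upper bound, so the real count would be higher.

```python
FRAME_MS = 300                    # upper bound on one request/answer frame
PACKETS_PER_FRAME = 2             # one request plus one answer
MS_PER_DAY = 24 * 60 * 60 * 1000

frames_per_day = MS_PER_DAY // FRAME_MS
packets_per_day = frames_per_day * PACKETS_PER_FRAME

print(frames_per_day)   # 288000
print(packets_per_day)  # 576000
```

That is more than half a million log lines per day for a single serial port, before logging anything else.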

Logging this way, you record the "what" but not the "why". In other words, even if you are able to catch a wrong packet, you have no indication of why that message was generated. And, in my experience, the majority of bugs hide on the "why" side.
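One possible way to keep both sides, sketched with Python's `logging` module: the reason goes out at `INFO` level, the raw bytes at `DEBUG` level so they can be switched off. The function `send_request`, its `reason` parameter and the logger name `serial` are hypothetical, and `port` is assumed to be any object with a `write(bytes)` method.

```python
import logging

logger = logging.getLogger("serial")  # hypothetical logger name


def send_request(port, payload, reason):
    """Write payload to port, logging why the packet is being sent."""
    # The "why": the decision that led to this packet.
    logger.info("sending %d-byte request: %s", len(payload), reason)
    # The "what": the raw bytes, only emitted when DEBUG is enabled.
    logger.debug("tx %s", payload.hex())
    port.write(payload)
```

With the logger set to `INFO`, a call such as `send_request(port, b"\x01\x02", "retry after timeout")` leaves one line explaining the retry and skips the hex dump. The full packet trace is still available by lowering the level to `DEBUG` when you really need it.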

Conclusions

Does my way of logging solve every problem? I don't think so. Every time you leave some information out of a log file, you are hiding potential issues. On the other hand, as said before, you cannot keep track of everything and, even if you could, you would still have to consider the time spent parsing an enormous file. In the end, it's just a matter of luck and experience.

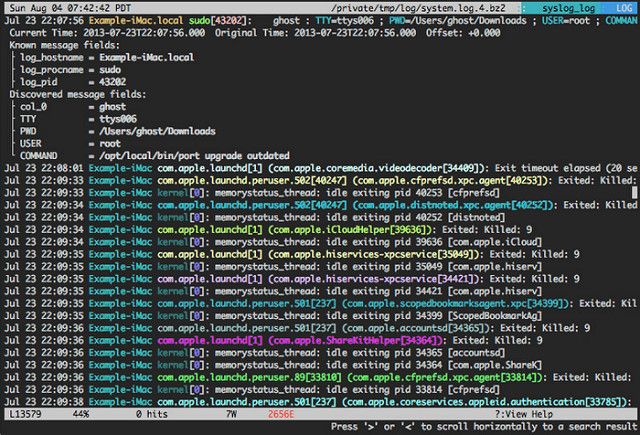

Image credits: Log Monitoring Tool - lnav by Linux Screenshots licensed under CC BY 2.0

Post last updated on 2017/07/27